While browsing the internet for some new inspiration for my own predictive model, I came across a very interesting possible feature: the Brier score.

The Brier score is a way to measure the accuracy of a predictive model. It is often used to measure the accuracy of weather forecasts. At first I thought I could use it as a kind of calibration feature, so that a predictive model recognizes when it was too inaccurate in the past. But using it as a feature to detect teams that the bookies predict well, or teams that could cause unexpected results, seems to be a more promising approach. Therefore, in this post I want to explain how to calculate the Brier score for a specific team based on the betting odds of its most recent matches.

How to calculate the Brier score

The Brier score follows a basic rule: the lower the Brier score, the better the prediction. Normally it is used for binary outcomes and therefore has a value between 0 and 1. The formula to calculate the Brier score looks like this:

$$BS = \frac{1}{N}\sum_{t=1}^{N}(f_t - o_t)^2$$

$f_t$ represents the forecasted probability.

$o_t$ represents whether the outcome happened (=1) or not (=0). If you have multiple forecasts (N), you take the mean.

An example of a simple binary forecast is the prediction of whether it rains on a given day:

- If the forecast is 100% and it rains – the Brier score is 0

- If the forecast is 70% and it rains – the Brier score is (0.7 – 1)^2 = 0.09

- If the forecast is 30% and it rains – the Brier score is (0.3 – 1)^2 = 0.49
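The rain example can be reproduced in a few lines. This is a minimal Python sketch. The function names `brier_binary` and `brier_mean` are illustrative only and do not come from any library.

```python
def brier_binary(forecast: float, outcome: int) -> float:
    """Brier score of one binary forecast: (f - o)^2, with o = 1 if the event happened, else 0."""
    return (forecast - outcome) ** 2


def brier_mean(forecasts, outcomes) -> float:
    """Mean Brier score over N forecasts."""
    pairs = list(zip(forecasts, outcomes))
    return sum(brier_binary(f, o) for f, o in pairs) / len(pairs)


# The three rain forecasts from the list; it rained every time (o = 1).
for f in (1.0, 0.7, 0.3):
    print(f, round(brier_binary(f, 1), 2))  # 1.0 0.0 / 0.7 0.09 / 0.3 0.49
```

Averaging the three forecasts with `brier_mean` gives (0 + 0.09 + 0.49) / 3 ≈ 0.19.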

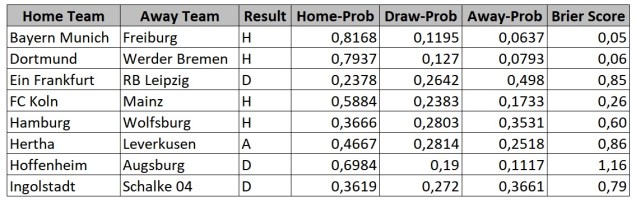

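Of course, forecasting a football match is not a binary prediction: there are 3 possible outcomes. The squared differences are summed over all three outcomes, so the resulting Brier score has a value between 0 and 2. The worst case is a 100% forecast for an outcome that does not happen: (1 – 0)^2 + (0 – 1)^2 = 2. The following table shows the Brier scores of all matches on the last matchday of the German Bundesliga 2016/17: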

The probabilities for the different results correspond to the Bet365 odds with the bookie margin removed. As you can see, the match between Bayern Munich and Freiburg ended as expected, and the resulting Brier score is very low:

(0.8168 – 1)^2 + (0.1195 – 0)^2 + (0.0637 – 0)^2 = 0.05

The match between Hoffenheim and Augsburg shows the exact opposite:

(0.6984 – 0)^2 + (0.19 – 1)^2 + (0.1117 – 0)^2 = 1.16

The Brier score is very high, so we can clearly say that this result was a surprise. The same applies to the matches Eintracht Frankfurt – RB Leipzig, Hertha – Leverkusen and Ingolstadt – Schalke. As a rule of thumb, a Brier score greater than 0.67 indicates an unexpected result. The threshold comes from a match with even probabilities. If each possible result had a probability of 1/3, the Brier score would be (1/3 – 1)^2 + (1/3)^2 + (1/3)^2 = 6/9 ≈ 0.67, regardless of the outcome.
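The two calculations above and the 0.67 threshold can be checked with a short Python sketch. It assumes that the probabilities are listed in the order home win, draw, away win. It also assumes that Hoffenheim – Augsburg ended in a draw, which is the outcome marked with 1 in the calculation above. The name `brier_multiclass` is hypothetical.

```python
def brier_multiclass(probs, outcome_index):
    """Sum of squared differences over all outcomes; 0..2 when probs sum to 1."""
    return sum(
        (p - (1.0 if i == outcome_index else 0.0)) ** 2
        for i, p in enumerate(probs)
    )


HOME, DRAW, AWAY = 0, 1, 2  # assumed order of the probabilities

bayern = brier_multiclass((0.8168, 0.1195, 0.0637), HOME)
hoffenheim = brier_multiclass((0.6984, 0.19, 0.1117), DRAW)
# Even probabilities: the score is the same whichever result happens.
uniform = [brier_multiclass((1 / 3, 1 / 3, 1 / 3), k) for k in (HOME, DRAW, AWAY)]

print(round(bayern, 2), round(hoffenheim, 2), [round(u, 2) for u in uniform])
# 0.05 1.16 [0.67, 0.67, 0.67]
```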

Brier score for binary football prediction

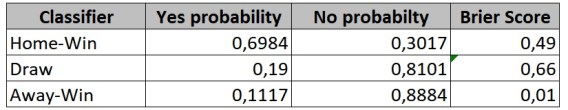

If you have already looked more closely at different machine learning algorithms, you will have noticed that not every algorithm is suitable for multi-class prediction. For example, an SVM (Support Vector Machine) is inherently a binary classifier. Because of this, a football prediction must be transformed into multiple binary classifications. You can do this with the one-vs-all strategy, building a separate classifier for each possible outcome of a football match. But what happens to the 3-class Brier score?

Let’s take another look at the match between Hoffenheim and Augsburg. Transforming the prediction into binary classifications results in 3 different classifiers: “home-win/no-home-win”, “draw/no-draw” and “away-win/no-away-win”. What would the Brier score be for the away classifier? The 3-class Brier score of 1.16 is clearly wrong here, because the result “no-away-win” had a probability of 89% and was therefore no surprise. So the Brier score has to be calculated for each binary classifier separately. The following table shows the Brier scores of the different binary classifiers for this match:

Update (30.04.2020):

I forgot to mention that the binary Brier score can be calculated more simply.

It’s just: (f – o)²

So for the home-win classifier it’s (0.3017 – 1)² ≈ 0.49, because the actual result was “no-home-win”, which had a forecast probability of 0.3017.

(see rain example at: https://en.wikipedia.org/wiki/Brier_score)
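Applying (f – o)² to all three one-vs-all classifiers of the Hoffenheim – Augsburg match might look like the following Python sketch. It assumes the probabilities 0.6984 / 0.19 / 0.1117 for home win / draw / away win and a draw as the result. The variable names are illustrative.

```python
def brier_binary(forecast, outcome):
    """(f - o)^2 for one binary classifier; o = 1 if the classifier's event happened."""
    return (forecast - outcome) ** 2


# Margin-free probabilities for Hoffenheim - Augsburg; the match ended in a draw.
probs = {"home": 0.6984, "draw": 0.19, "away": 0.1117}
actual = "draw"

# One-vs-all: each classifier predicts "event" vs "no event" for one outcome.
scores = {k: brier_binary(p, 1 if k == actual else 0) for k, p in probs.items()}

for k, s in scores.items():
    print(k, round(s, 2))              # home 0.49, draw 0.66, away 0.01
print(round(sum(scores.values()), 2))  # 1.16
```

Scoring the home-win classifier from the event side (f = 0.6984, o = 0) gives the same value as (0.3017 – 1)², up to rounding. This holds because (f – o)² does not change when f and o are both replaced by 1 – f and 1 – o. The three binary scores also add up to the 3-class score of 1.16, since the 3-class sum is made of exactly these three terms.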

Implement Brier score feature

Now you know how to calculate the Brier score, both for a 3-class and for a binary classification. Just like for the other features, you have to distinguish between the home and the away statistics. The corresponding satellite table for the historic matches looks like this:

GitHub – Brier score satellite for historic matches

The satellite table calculates the Brier score for the home team (lines 18-78) and the away team (lines 82-142). I used the Pinnacle odds (e.g. lines 31-33) as the basis for the Brier score calculation, for a simple reason: Pinnacle is known to have the sharpest odds. Of course, the calculation could be done with the odds of any other bookie. The number of matches used for the calculation is set to 5 games. Which value works best has to be determined by testing predictive models. In every example I saw, a relatively small number of games was used to calculate the average Brier score.
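The actual implementation is the SQL satellite table linked above. As a rough illustration of the same idea, here is a Python sketch. It assumes a chronologically sorted list of matches with margin-free home/draw/away probabilities. The `Match` class and the `rolling_brier_features` function are hypothetical names. For every match, the sketch averages the 3-class Brier scores of the home team's previous home games and of the away team's previous away games. Only matches played before the current one are used.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Match:  # hypothetical record layout
    home: str
    away: str
    probs: Tuple[float, float, float]  # margin-free (home, draw, away) probabilities
    result: int                        # 0 = home win, 1 = draw, 2 = away win


def brier_multiclass(probs, outcome_index):
    return sum((p - (1.0 if i == outcome_index else 0.0)) ** 2 for i, p in enumerate(probs))


def rolling_brier_features(
    matches: List[Match], window: int = 5
) -> List[Tuple[Optional[float], Optional[float]]]:
    """(home team's avg over last `window` home games, away team's avg over last `window` away games)."""
    home_hist = defaultdict(lambda: deque(maxlen=window))  # team -> scores of its home games
    away_hist = defaultdict(lambda: deque(maxlen=window))  # team -> scores of its away games
    features = []
    for m in matches:
        h, a = home_hist[m.home], away_hist[m.away]
        # Features use only earlier matches; None means no history yet.
        features.append((sum(h) / len(h) if h else None, sum(a) / len(a) if a else None))
        score = brier_multiclass(m.probs, m.result)
        h.append(score)  # deque(maxlen=window) drops the oldest score automatically
        a.append(score)
    return features
```

Computing the feature before adding the current match's own score keeps the result of that match out of its feature, which matters when the feature is used for prediction.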

Problems of the Brier score feature

One problem of such a Brier score calculation should be kept in mind: the small sample size! In the example implementation I used a sample size of 5 home or away games. With such a small sample, it can easily happen that, e.g., 4 matches of a team have a surprising outcome. This should not be interpreted as evidence that the team is over- or underestimated by the bookie, as it could just be good or bad luck. A Monte Carlo simulation of the sample size can show this. Whether the Brier score feature is a useful indicator of whether a team performs very consistently or produces unpredictable results has to be proven by simulating predictive models with and without the feature.
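A Monte Carlo simulation of this kind could be sketched in Python as follows. The key assumption is that the bookie's margin-free probabilities are exactly right, so every surprise is pure chance. The simulation draws the results of five matches from these probabilities many times and checks how often the 5-game mean Brier score still exceeds 0.67. The probabilities of 50% / 27% / 23% for each game are hypothetical, as are the function names.

```python
import random


def brier_multiclass(probs, outcome_index):
    return sum((p - (1.0 if i == outcome_index else 0.0)) ** 2 for i, p in enumerate(probs))


def simulate_mean_brier(probs_per_match, n_sims=10_000, seed=42):
    """Mean Brier score of a sample of matches, with results drawn from the forecast itself."""
    rng = random.Random(seed)
    means = []
    for _ in range(n_sims):
        total = 0.0
        for probs in probs_per_match:
            # Draw the match result with the bookie's probabilities as weights.
            outcome = rng.choices(range(len(probs)), weights=probs)[0]
            total += brier_multiclass(probs, outcome)
        means.append(total / len(probs_per_match))
    return means


# Hypothetical margin-free probabilities for five home games of one team.
five_games = [(0.50, 0.27, 0.23)] * 5
means = simulate_mean_brier(five_games)
share_above = sum(m > 0.67 for m in means) / len(means)
print(f"share of 5-game samples with mean Brier > 0.67: {share_above:.1%}")
```

If a noticeable share of samples exceeds the threshold even though the forecasts are correct by construction, then a high 5-game average alone says little about a team.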

Update (31.08.2017):

The Brier score is now calculated from Bet365 odds instead of Pinnacle odds, as Pinnacle odds are not available for older seasons.
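The post does not say how the bookie margin is removed from the odds. One simple approach, and an assumption here, is to take the implied probabilities 1/odds and divide each by their sum so that they add up to 1. A Python sketch with hypothetical decimal odds and a hypothetical function name:

```python
def margin_free_probabilities(odds):
    """Normalize implied probabilities 1/odds to sum to 1; also return the bookie margin."""
    implied = [1.0 / o for o in odds]
    overround = sum(implied)  # > 1 because of the bookie margin
    return [p / overround for p in implied], overround - 1.0


# Hypothetical decimal odds for home win, draw, away win.
probs, margin = margin_free_probabilities((2.00, 3.40, 4.00))
print([round(p, 4) for p in probs], f"margin: {margin:.2%}")
# [0.4789, 0.2817, 0.2394] margin: 4.41%
```

Proportional normalization spreads the margin evenly across all outcomes. Other margin-removal methods exist and would give slightly different probabilities.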

If you have further questions, feel free to leave a comment or contact me @Mo_Nbg.

For the home-win classifier you have a Brier score of 0.49. Could you explain? I got 0.18:

(0.6984 - 1)^2 + (0.3017 - 0)^2 = 0.18. Why?


Hi 🙂

thanks for the question.

1) The result of the match was not a home win; that’s why 0.3017 is the probability of the actual outcome.

2) For a binary classifier, the Brier score can be calculated more simply:

(f – o)²

see the rain example at: https://en.wikipedia.org/wiki/Brier_score

(I just noticed that this explanation is missing from the post, and I should add it to prevent misunderstandings.)

So you got:

(0.3017 – 1)² = 0.49

Hope that helps 🙂


Thank you 🙂
